About once a year, I get the urge to push my programming skills and knowledge in a new direction. Some years, this results in some new (usually small) raytracers. Sometimes, it results in a program that plays a moderately good game of checkers. Other years, it results in code that predicts the orbits of satellites.

For quite some time, I’ve been pondering actually trying to develop some modest skills with GPU programming. After all, the images produced by state-of-the-art GPU programs are arguably as good as the ones produced by my software-based raytracers, and are rendered a hundred, a thousand, or even more times faster than the programs that I bat out in C.

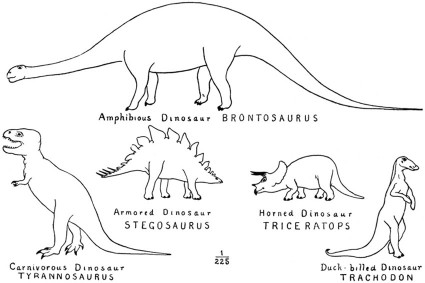

But here’s the thing: I’m a dinosaur.

I learned to program over 30 years ago. I’ve been programming in C on Unix machines for about 28 years. I am a master of old-school tools. I edit with vi. I write Makefiles. I can program in PostScript, and use it to draw diagrams. I write HTML and CSS by hand. I know how to use awk and sed. I’ve written compilers with yacc/lex, and later flex/bison.

I mostly hate IDEs and debuggers. I’ve heard (from people I respect) that Visual Studio/Xcode/Eclipse is awesome, that it allows you to edit and refactor code, that it has all sorts of cool wizards to write the code that you don’t want to write, that it helps you remember the arguments to all those API functions you can’t remember.

My dinosaur brain hates this stuff.

By my way of thinking, a wizard to write the parts of code you don’t want to write is writing code that I probably don’t really want to read either. If I can’t keep enough of an API in my head to write my application, my application is either just a bunch of API calls, inarticulately jammed together, or the API is so convoluted and absurd that you can’t discern its rhyme or reason.

An hour of doing this kind of programming is painful. A day of doing it gives me a headache. If I had to do it for a living, my dinosaur brain would make me lift my eyes toward the heavens and pray for the asteroid that would bring me sweet, sweet release.

Programming is supposed to be fun, not make one consider shuffling off one’s mortal coil.

Okay, that’s the abstract version of my angst. Here’s the down-to-earth measure of my pain. I was surfing over at legendary demoscene programmer and fellow Pixarian Inigo Quilez’s blog looking for inspiration. He has a bunch of super cool stuff, and was again inspiring me to consider doing some more advanced GPU programming. In particular, I found his live coding thing to be very, very cool. He built an editing tool that allows him to type in shading language code and immediately execute it. Here’s an example YouTube vid to give you a hint:

I sat there thinking about how I might write such a thing. I didn’t feel a great desire to write a text editor (I think I last did it around 1983) so my idea was simple: design a simple OpenGL program that drew a single quad on the screen, using code from a vertex/fragment shader that I could edit using good old fashioned vi. Whenever the OpenGL program noted that the saved version of these programs had been updated, it would reload/rebind the shader, and execute it. It wouldn’t be as fancy as Inigo’s, but I figured I could get it going quickly.
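To make the "noted that the saved version had been updated" part concrete, here is a minimal sketch of one way to do it, assuming a POSIX system and plain polling with stat(). The names watched_file, watched_file_changed and read_text_file are made up for illustration; they don't come from OpenGL, GLUT or any other library, and the OpenGL side is deliberately left out so the snippet compiles on its own.

```c
#include <assert.h>
#include <stdio.h>
#include <stdlib.h>
#include <sys/stat.h>
#include <time.h>

/* One watched shader source file and the modification time last seen.
   (Hypothetical names, chosen for this sketch only.) */
typedef struct {
    const char *path;
    time_t last_mtime;
} watched_file;

/* Returns 1 if the file's mtime differs from the recorded one, 0 if it is
   unchanged, and -1 if the file cannot be stat'ed (say, in mid-save). */
int watched_file_changed(watched_file *w)
{
    struct stat st;

    assert(w != NULL && w->path != NULL);
    if (stat(w->path, &st) != 0)
        return -1;
    if (st.st_mtime == w->last_mtime)
        return 0;
    w->last_mtime = st.st_mtime;   /* remember what we saw */
    return 1;
}

/* Reads a whole file into a NUL-terminated buffer that the caller frees.
   Returns NULL on any failure. */
char *read_text_file(const char *path)
{
    FILE *f = fopen(path, "rb");
    char *buf = NULL;
    long n;

    if (f == NULL)
        return NULL;
    if (fseek(f, 0, SEEK_END) == 0 && (n = ftell(f)) >= 0 &&
        fseek(f, 0, SEEK_SET) == 0 && (buf = malloc((size_t)n + 1)) != NULL) {
        size_t got = fread(buf, 1, (size_t)n, f);
        buf[got] = '\0';
    }
    fclose(f);
    return buf;
}
```

The intended use would be to call watched_file_changed() for each shader file from something like a GLUT idle callback, and when it returns 1, pass the text from read_text_file() through glShaderSource, glCompileShader and glLinkProgram again. Note that st_mtime only has one-second resolution, so two saves within the same second can go unnoticed.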

While I have said I don’t know much about GPU programming, that strictly speaking isn’t true. I did some OpenGL stuff recently, using both GLSL and even CUDA for a project, so it’s safe to say this isn’t exactly my first rodeo. But this time, I thought that perhaps I should do it on my Windows box. After all, Windows probably still has the best support for 3D graphics (think I) and it might be of more use. And besides, it would give me a bit broader skill base. Not a bad thing.

So, I downloaded Visual Studio 2010. And just like the Diplodocus of old, I began to feel the pain, slowly at first, as if some small proto-mammals were gnawing at my tail, but slowly growing into a deep throbbing in my head.

On my Mac or my Linux box, it was pretty straightforward to get OpenGL and GLUT up and running. Being the open source guy that I am, I had MacPorts installed on my MacBook, and a few judicious apt-get installs on Linux got all the libraries I needed. On the Mac, the paths I needed to know were mostly /opt/local/{include,lib} and on the Linux box, perhaps /usr/local/{include,lib}. The same six line Makefile would compile the code that I had written on either platform if I just changed those things.

But on Windows, you have this “helpful” IDE.

Mind you, it doesn’t actually know where any of these packages you might want to use live. So, you go out on the web, trying to find the distributions you need. When you do find them, they aren’t in a nice, self-installing format: they’re just naked zip files, usually with 32 bit and 64 bit versions, and without even a good old .bat file to copy them to the right place for you. On Mac OS X/Linux, I didn’t even need to know if I was running 64 bit or 32 bit: the package managers figured that out for me. On Windows, the system with the helpful IDE, I have to know that I need to copy the libs to a particular place (probably something like \Program Files (x86)\Microsoft SDKs\Windows\v7.0A\Lib) and the include files to somewhere else, and maybe the DLLs to a third place, and if you put a 32 bit DLL where it expected a 64 bit one (or vice versa) you are screwed. But even after dumping the files in those places, you still have to configure your project wizard to add these libraries, by tunneling down through the Linker properties, under tab after tab. Oh, and these tabs where you enter library names like freeglut32.lib? They don’t even bring up the file browser, not that you would really want to go grovelling around in these directories anyway, but at least there would be a certain logic to it.

And then, of course, you can start your Project. Go look up a tutorial on doing a basic OpenGL program in Visual C++, and they’ll tell you to use the Windows Wizard to create an empty project: in other words, all that vaunted technology, and they won’t even write the first line of code for you for your trouble.

After all this, I got to the point where I could hit F5 to build and run, and what happened? My simple (and proven on other operating systems) code failed to start, with the message:

Application was unable to start correctly (0xc000007b)

You have got to be kidding me. When did we transport ourselves back to 1962 or so when a numerical error code might have been a reasonable choice? If your error code can’t tell you a) what is wrong and b) how to fix it, it’s absolutely useless. You might as well just crash the machine.

This was the start of hour three for me. And it was the end of Visual Studio. I must compliment Microsoft on their uninstaller: it worked perfectly, the first actual success of the day.

Undoubtedly some of you out there are going to proclaim that I’m stupid (probably true) or inexperienced with it (certainly true) and that if I just kept at it, all would be well. These are remarkably similar to the claims that I’ve heard from other religions, and I have no reason to believe that the “Faith of Visual Studio” will turn out any better than any of the other religions.

Perhaps before you post, you should just consider that I warned you that I was a dinosaur when I began, and perhaps I was right, at least about that.

Luckily, along with this project, I’ve been thinking of another dimension along which I could develop some new skills, and it turns out that this stuff appears to be more in line with my dinosaur sensibilities, and that’s the Go Programming Language.

Go has some really cool ideas and technology inside, but at its core, it simply seems better to my dinosaur brain. The legendary Rob Pike does a great job explaining its strengths, and almost everything he says about Go resonates with me and addresses the subject of the long rant above. Here’s one of his great talks from OSCON 2010:

I know it will be hard to get my mind wrapped around it, but I can’t help but think it will be a more pleasant experience than the three hours I just spent.

I know the GPU thing isn’t going to go away either. I just kind of wish it would.