This is a math/geeky/computer post of something relatively simple, but it arose in the wild in a program that I wrote several years ago, and when I saw it again, it confused me, and I spent a bit of time thinking about it, and I thought I might just write it up. If math/geeky/computer stuff bores you, perhaps you should move on.

If I’ve still got you, today’s post is about combinations, a topic that arises in lots and lots of computer programs. For instance, the program that spawned this post was this program for computing the number of possible positions in a game of checkers that I did back in 2009. It uses a function called “comb” which takes two arguments a and b, which computes the number of different ways of selecting b objects from a collection of a objects. Here’s the implementation from that post:

[sourcecode lang=”python”]

def fact(n):

if n == 0:

return 1

else:

return n*fact(n-1)

def comb(a, b):

return fact(a) / (fact(b)*fact(a-b))

[/sourcecode]

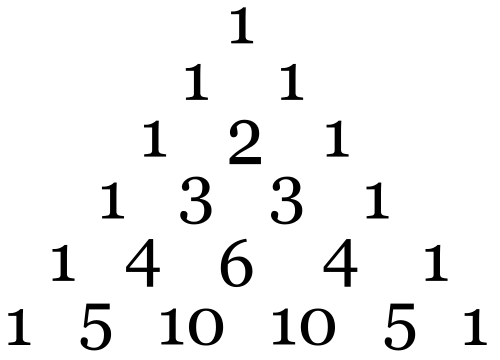

If you’ve done a lot of programming, this probably seems fairly obvious to you. This is one of those formulas that I don’t have to think about: I learned it so long ago that I don’t even remember how I originally learned it. It might have been when I first learned about Pascal’s Triangle back in grade school. I probably learned the formula to compute these coefficients using the factorial function, and I’ve probably used it dozens and dozens of times in various contexts. But really, when I thought about it, the factorial function doesn’t seem to me to be completely obvious when staring at the triangle. When I first learned about Pascal’s triangle, the rules were something like this. First, write down a at the top, then write a bunch of rows underneath it, each row containing one more number so they form a triangle. The first and last number in each row needs to be a one. The other entries are the sum of the numbers above and to the left and above and to the right. If you think of the number of path starting at the root, and reaching the desired new node, it’s clear that it’s the sum of the number of paths reaching the node above and to the left, and above and to the right.

Pascal’s Triangle

Which brings me to a different chunk of code. In the posting above, I had mentioned that I had written a program to compute the number of possible checkers positions before, but had lost the code, causing me to write the code above. Butr recently I found that original code. But this code didn’t contain the factorial/comb implementation above. It had code that looked like this.

[sourcecode lang=”python”]

def c(a, b):

if b == 0 or b == a:

return 1

r = c(a-1, b-1) + c(a-1, b)

return r

[/sourcecode]

At first, this seemed less familiar to me. But if you think about Pascal’s triangle, this is exactly what I described above. If you think of trying to compute the bth number in the ath row, it’s just the sum of the numbers in the row above, to the left and right.

And this works! If you compute c(52, 5), you can verify that there are 2598960 ways to deal a 5 card poker hand from a single deck. In a way, this code seems more elegant to me: what was with all that multiplication and division stuff up above? But there is a problem: performance.

A moment’s thought should reveal why that should be the case. This code only returns 1, or the sum of two other terms. That means to come up with the 2598960, it bottoms out that recursion… 2598960 times. Modern computers can count that high, even by ones, even in python rather quickly. It took about 1.4s to compute. But to compute c(52, 6) takes about 7 times longer (because the number is roughly 7x larger). Computing c(52,13) is out of the question with that code.

But my original program computed the number of combinations this way, and was reasonably efficient. What gives?

Well, I lied. The code in my npos program wasn’t exactly like that. Here’s the complete code:

[sourcecode lang=”python”]

#!/usr/bin/env python

#

# some programs to compute the number of legal positions in checkers

#

import locale

locale.setlocale(locale.LC_ALL, "")

mem = {}

def c(a, b):

if b == 0 or b == a:

return 1

if mem.has_key((a, b)):

return mem[(a, b)]

r = c(a-1, b-1) + c(a-1, b)

mem[(a, b)] = r

return r

def num(b, bk, w, wk, f):

r = c(4,f)*c(24,b-f)*c(28-(b-f),w)*c(32-b-w,bk)*c(32-b-w-bk,wk)

return r

def np(n):

total = 0

for b in range(min(n, 12)+1):

for bk in range(min(n, 12)-b+1):

for w in range(min(n-b-bk, 12)+1):

for wk in range(min(n-b-bk, 12)-w+1):

for f in range(min(b, 4)+1):

total += num(b, bk, w, wk, f)

return total – 1

tot = {}

for n in range(25):

tot[n] = np(n)

maxnew, maxtot = 0, 2**64-1

for n in range(1, 25):

new = tot[n] – tot[n-1]

newstr = locale.format("%d", new, True)

totstr = locale.format("%d", tot[n], True)

maxnew = max(maxnew, len(newstr))

maxtot = max(maxtot, len(totstr))

print " N%s" % "possible positions".center(maxnew)

print (maxnew+3)* ‘-‘

for n in range(1, 25):

new = tot[n] – tot[n-1]

newstr = locale.format("%d", new, True)

print "%2d %s" % (n, newstr.rjust(maxnew))

print (maxnew+3)* ‘-‘

totstr = locale.format("%d", tot[24], True)

[/sourcecode]

Can you spot the difference? The c function is memoized. The vast majority of invocations of the function have been evaluated once before. So, this code remembers the value of each invocation, and before computing, looks up the result to see if we’ve computed it before. An obvious way to implement this lookup would be as a two dimensional array, but Python’s dictionary types are quick and easy. When the program is run, it stores stores 306 values. With this added, the entire program, which counts 500,995,484,682,338,672,639 positions executes in just about 1 second on my laptop. And while it does rely on bignum arithmetic, it doesn’t require multiplication or division: just addition works fine.

I like it.

Hi Mark,

I enjoyed this post. It is quite timely, as I am reading SICP (finally) and am in the early section (1.2.2) that discusses tree recursion and introduces memoization. As a result I recognized it (and could name it.) In a month or two that may not be true anymore.

73

Matthew