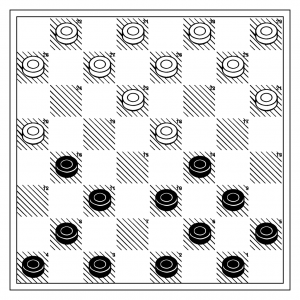

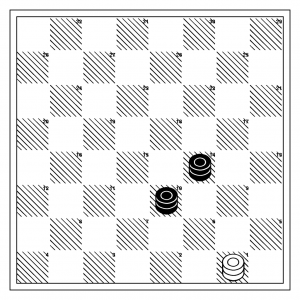

So, I’ve spent a few hours over the last couple of weeks trying to debug milhouse, the computer checkers program that I’ve tinkered together over the last few years. It’s kind of maddening: in so many ways it seems to work, but it has a number of infuriating behaviors which can only be classified as bugs. Here’s an example. Consider this simple 2K vs. 1K endgame (entirely typical, and the kind of endgame that even I can win).

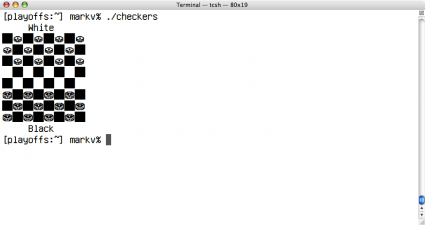

It’s easy, right? Sure enough, milhouse finds the 14-move sequence that basically forces the White king to flee the double corner, where disaster awaits. The search is very quick, taking just a fraction of a second.

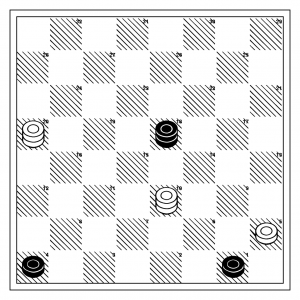

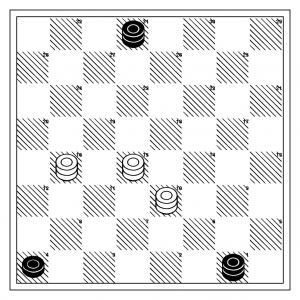

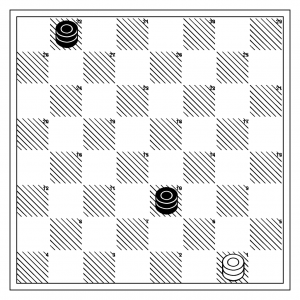

But here’s the thing: nominally more difficult puzzles seem to confuse milhouse entirely. Let’s modify the puzzle to make the winning sequence just a bit longer.

Obviously, this is just 8 half moves (or plies) away from the previous one. (You can see that if White does anything but jigger back and forth, he does nothing but hasten his own demise.) But for some odd reason, a 25-ply search doesn’t discover this winning line.

So, here’s the thing: alpha-beta search is a lot like binary search. Writing a bug-free binary search is remarkably challenging. Try it sometime. There are all sorts of odd edge conditions you can get wrong if you aren’t careful. Jon Bentley uses it as the prototypical example of how to develop and debug software in his classic work *Programming Pearls*. And alpha-beta search is 10x worse, for a number of reasons:

- The algorithm has the same sort of hidden subtlety that binary search does. There are many edge conditions (is the invariant that alpha < beta? alpha <= beta? do cutoffs occur when score is > alpha? or >= alpha?) that aren’t consistently described in the classic literature on algorithms.

- It operates on (typically) very large search trees. It’s hard to find bugs by examining the search tree because of its raw size, and because many minor problems (such as the comparison issues above) are effectively hidden because they often don’t affect the outcome of searches.

- There is lots of information about alpha-beta search on the web, but almost all available sources suffer from poorly described invariants, and they often leave out the necessary complications (such as transposition tables) which further complicate the design.

- If you do find code that does work and is non-trivial, you can’t understand it. Game programmers love to “optimize” code, which means that all useful patterns and structure have been abstracted out of the program, leaving only a helpless hodgepodge.
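As a point of reference, one self-consistent set of answers to the edge-condition questions above can be sketched in Python. This is not milhouse's code. It is a minimal fail-soft negamax over a hypothetical toy tree (nested lists with integer leaves, each leaf scored for the side to move at that leaf), and the names `negamax`, `alphabeta`, and `random_tree` are made up for the illustration. The choices made here are: the window is strictly non-empty (`alpha < beta`), alpha rises only on `score > alpha`, and the cutoff fires on `alpha >= beta`.

```python
import math
import random


def negamax(node):
    """Plain negamax with no pruning: the exact value for the side to move."""
    if isinstance(node, int):
        return node
    return max(-negamax(child) for child in node)


def alphabeta(node, alpha, beta):
    """Fail-soft negamax alpha-beta.

    Invariant on entry: alpha < beta.
    If the true value v satisfies alpha < v < beta, v is returned exactly.
    If v <= alpha, the result r is an upper bound:  v <= r <= alpha.
    If v >= beta,  the result r is a lower bound:   beta <= r <= v.
    """
    assert alpha < beta, "search window must be non-empty"
    if isinstance(node, int):
        return node
    best = -math.inf
    for child in node:
        # The child sees the window negated and swapped.
        score = -alphabeta(child, -beta, -alpha)
        if score > best:
            best = score
        if best > alpha:
            alpha = best      # strictly better than anything seen so far
        if alpha >= beta:
            break             # cutoff: the opponent will avoid this node
    return best


def random_tree(depth, branching, rng):
    """Build a uniform toy tree with small integer leaves (ties are common)."""
    if depth == 0:
        return rng.randint(-10, 10)
    return [random_tree(depth - 1, branching, rng) for _ in range(branching)]


if __name__ == "__main__":
    rng = random.Random(1)
    tree = random_tree(4, 3, rng)
    print("negamax  :", negamax(tree))
    print("alphabeta:", alphabeta(tree, -math.inf, math.inf))
```

The useful part is less the code than the docstring: once the contract for a return value outside the window is written down, it can be checked mechanically against the unpruned search on thousands of small random trees, which is far easier than staring at a real search tree.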

Okay, I’m just bitching. I’ve got 99% of this code right, but the 1% is causing me grief.

Addendum: Oh, and here’s another interesting thing. You might remember from a previous blog posting that I counted the number of possible checkers positions for each number of pieces. If you look at the table, you’ll find that there are about a quarter of a million positions with three pieces or fewer. My checkers program can compute about twenty-five million positions a second, yet I’m not finding the winning line. It’s not too hard to imagine what the problem is. Let’s assume that the branching factor for this problem is roughly eight. If the winning line is 25 plies long, the full search tree has about 8^25 nodes. That’s roughly 3.8E22 positions. Even with alpha-beta searching, that’s a huge number of nodes. This is where transposition tables are supposed to come in. There are many paths that lead toward equivalent positions, so there is no reason to re-search the same trees over and over again. So, you maintain a table that records the outcomes of previous searches, and if the same position shows up, you already know the answer and don’t have to search.
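To put numbers on that argument, here is a small Python sketch. The first part just redoes the arithmetic with the figures quoted above. The second part is a hypothetical toy game (a token on a ring of 64 squares, nothing to do with checkers or with milhouse's actual table) that shows how much a table keyed on position and remaining depth can shrink a search. It deliberately uses plain negamax without alpha-beta: once pruning is involved, each entry also has to record whether its score is exact or only a bound, which is exactly the kind of subtlety that is easy to get wrong.

```python
BRANCHING = 8
PLIES = 25
NODES_PER_SECOND = 25_000_000  # speed quoted in the text

leaves = BRANCHING ** PLIES
years = leaves / NODES_PER_SECOND / (3600 * 24 * 365)
print(f"{leaves:.2e} leaf positions, about {years:.1e} years of searching")

RING = 64          # assumption: toy game, a token on a ring of 64 squares
STEPS = (1, 2, 3)  # each move advances the token 1, 2 or 3 squares


def evaluate(pos):
    """Arbitrary static score for the side to move, just to have values."""
    return (pos * 37) % 11 - 5


def search(pos, depth, table, counter):
    """Depth-limited negamax; `table` may be None to disable caching.

    counter[0] counts every call, including table hits and leaves.
    """
    counter[0] += 1
    if depth == 0:
        return evaluate(pos)
    # The remaining depth is part of the key: a shallower result for the
    # same position is not interchangeable with a deeper one.
    key = (pos, depth)
    if table is not None and key in table:
        return table[key]
    best = max(-search((pos + step) % RING, depth - 1, table, counter)
               for step in STEPS)
    if table is not None:
        table[key] = best
    return best


if __name__ == "__main__":
    depth = 12
    plain, cached = [0], [0]
    value_plain = search(0, depth, None, plain)
    value_cached = search(0, depth, {}, cached)
    print("same value:", value_plain == value_cached)
    print("calls without table:", plain[0])
    print("calls with table   :", cached[0])
```

In the toy game only 64 positions exist, so the table caps the work at a few thousand calls no matter how the tree branches. The real endgame has the same flavor: about a quarter of a million positions, against a nominal tree of 8^25.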

Obviously, mine isn’t working too well. If it were, I’d expect to quickly expand the search perimeter to find nodes I hadn’t seen before, and locate the winning line.