It seems like the last month has been rife with stories of corporations doing things that annoy and irritate their customers. Facebook privacy concerns. Google sniffing Wi-Fi. Apple rejecting apps for inscrutable reasons. Heck, BP not checking their blow-out preventer.

Each of these has caused me a bit of annoyance: some perhaps more than they should, some less than they should. But the subject of today’s rant is AT&T’s New Lower-Priced Wireless Data Plans. Their own press release says that customers can choose between two new more affordable plans: “either a $15 per month entry plan or a $25 per month plan with 10 times more data.”

Wow, sounds great, huh?

Well, for some people it probably is. Maybe even for me. Let’s look at my data usage over the last few months:

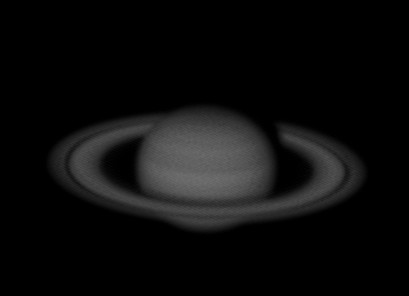

My Data Usage Over the Last Few Months

As you can see, I’m probably safe in the 2GB usage pattern, so I could in theory sign up for the 2GB data plan for $25 and save myself $5 a month. So why am I unhappy?

Three things:

First of all, there are overages. Cell phone companies love to charge you overages. Let’s say that you sign up for the 200MB DataPlus plan, and use 201 megabytes in a month, instead of 199 megabytes. AT&T will nicely charge you an extra $15 for the next 200 megabytes. And $15 for the next 200 after that. Let’s say you have a bad month, like I did, and you use 1GB of transfer. AT&T will charge you $75 for that 1GB: five 200-megabyte blocks at $15 each. If you had just signed up for their DataPro plan, you would have been charged only $25, and you’d have gotten twice the data for that. It’s not like they have to pay some human overtime to come in and move your data around: the data is already delivered. They could charge you less, but they choose to charge you more, to entice you to do what I do, which is to sign up for a more expensive plan as a hedge against large overages. My current unlimited data plan is the best kind of hedge: a fixed rate plan. I typically use about 1/4 of what the 2GB limit would give me, but I don’t have to worry: if I need the bandwidth, it’s there. With the new proposed plan, I have no such guarantee, meaning I have to watch my usage more carefully, which is an added mental annoyance that I didn’t have before.
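To make the overage arithmetic concrete, here is a minimal sketch in Python. It uses only the prices quoted in this post, treats 1GB as 1000MB (which is what the $75 figure implies), and assumes every started 200MB block is billed in full. The function name `dataplus_bill` is made up for illustration; this is not AT&T's actual billing logic.

```python
import math

# Figures from the post. 1 GB is treated as 1000 MB (an assumption that
# matches the $75 example); AT&T's real rounding rules may differ.
DATAPLUS_BLOCK_MB = 200     # data covered by each $15 block
DATAPLUS_BLOCK_PRICE = 15   # dollars per block, base or overage
DATAPRO_PRICE = 25          # dollars for the 2 GB plan


def dataplus_bill(used_mb):
    """Hypothetical helper: monthly DataPlus charge in dollars.

    Assumes the base $15 is always owed and that every additional
    200 MB block that is started costs another $15.
    """
    blocks = max(1, math.ceil(used_mb / DATAPLUS_BLOCK_MB))
    return blocks * DATAPLUS_BLOCK_PRICE


if __name__ == "__main__":
    for used_mb in (199, 201, 1000):
        print(f"{used_mb} MB on DataPlus: ${dataplus_bill(used_mb)} "
              f"(DataPro would be ${DATAPRO_PRICE})")
```

Under those assumptions, 199MB costs $15, 201MB costs $30, and the 1GB month costs $75, three times the flat $25 DataPro price.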

While I’m on this kick, here’s another pet peeve about overages. The phone company knows how many minutes you’ve used. They know how much data you’ve used. They could just give you the option of having your service stop when you reach your limit. Previously, this was declared “impossible” by AT&T, with the net result that in a fit of teen… shall we say… indiscretion my son managed to run up a $700 cell phone bill by exceeding his minutes. Now, AT&T has the mechanism in place, but will charge you $4.99 for that privilege. That’s just extortion.

The second thing that annoys me is that AT&T is finally offering cell phone tethering. “What’s annoying about that?” you ask. Well, previously you couldn’t get tethering on the iPhone from AT&T, but today, they announced that you can get it for $20. And for that… you get… well, pretty much nothing. Yes, you can hook your laptop to the network via your iPhone, but you don’t get any extra bandwidth. AT&T is charging you more for the bits you send from your laptop, based solely on their point of origin. Sure, they might reasonably expect that users who make use of tethering will use their data connections more, but they already are going to shaft you when you hit your overages anyway. That’s what those overages are meant to deter. To spend an extra $20 on top of that seems absurd.

Lastly of course, the sizing of these plans may be adequate today, but as network speeds improve (well, on OTHER cellular networks anyway) and as the demand for more bandwidth from applications like video grows, these plans will grow increasingly burdensome for more and more consumers. Which, of course, AT&T will be happy to charge you for, as you rack up more overages.

I get the motivation: there are people out there who use many times the data I do, and pay no more than I do. I’ve heard that 3% of cell phone users account for 40% of all data transmitted in the Bay Area (read it somewhere today, didn’t save the link, but even if the number is wrong, it’s probably not very wrong). Obviously, AT&T would love to get those people off the network, or at least lower their usage, because then the network behaves as if they had upgraded it: they have more available bandwidth that they can sell to more customers. Heck, I’m not even really objecting to the pricing: it’s a powerful incentive to lower usage of the 3%, while actually lowering my bill MOST of the time by $5 a month. But cell phone companies already have a lot of hostile practices in place that are bad for consumers. They charge a fortune for text messages, which is idiotic. They limit voice minutes, and place no real limit on overages. They charge you for early termination. Activation. Enough. We love our cell phones, we want to use your product, but you guys have to toss us a bone once in a while.

I suspect that it might be better to pick up an iPod touch and run Skype most of the time, and get a Pay As You Go cell phone to keep in my car for emergencies. I’d probably save $400 a year on cell phone bills, and it would piss me off less.

This concludes the rant of the day.