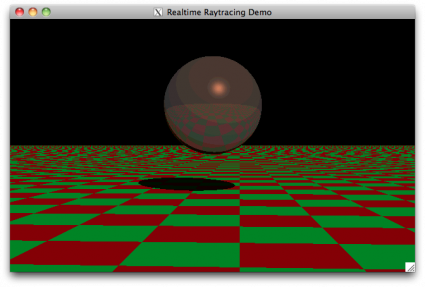

Back in 2000, I was intrigued by the various demos that I saw which attempted to implement real time raytracing. I wondered just what could be done with the computers I had on hand, without using any real tricks, but just a straightforward implementation of ray/sphere and ray/patch intersection. As I recall, I got two or three frames a second back then. I dusted off this code, and recompiled it for my MacBook (it uses OpenGL/GLUT, so it should be reasonably portable) and now it actually runs pretty reasonably. The code isn’t completely horrific, and might be of some use to someone as an example. It’s not clever, just straightforward and relatively easy to understand.

RTRT3, a tiny realtime raytracer

Addendum: I recompiled this on some of our spiffy machines at work, and immediately encountered a bug: there is nothing really to prevent infinite recursion. It’s possible (especially when the sphere and plane are touching) for a ray to get “stuck” between the sphere and plane. The simplest cure is to just keep track of how deep the recursion is by passing a “level” variable down through the intersect routines, and return FALSE for any intersection which is “too deep” (say, beyond six reflections deep). When modified thusly, I get about 96 FPS on my current HP desktop machine, running on one core. It has 12, incidently.

I couldn’t compile this on my OSX install with your makefile, so this is what I had to do:

Change the #include to #include in rtrt3.c

and compile and link with: gcc -framework GLUT -framework OpenGL -framework Cocoa -lm rtrt3.c -o rt3rt3

Then it works – might have a fiddle with the code and see how I can break it…